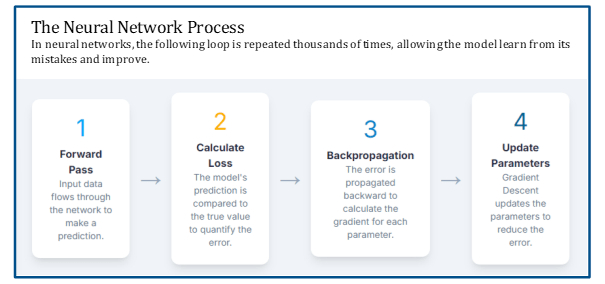

Step 1: Forward Pass

- network makes a guess

- it takes the input, passes it through each layer using its current weights and biases, and produces an output
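
A minimal sketch of a forward pass in plain Python. The sigmoid activation, the layer sizes, and every weight and bias value here are assumptions chosen for illustration; the post itself does not specify them.

```python
import math

def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def forward(x, layers):
    """Pass input x through each (weights, biases) layer in turn."""
    activation = x
    for weights, biases in layers:
        # Each row of weights belongs to one neuron in the layer.
        activation = [
            sigmoid(sum(w * a for w, a in zip(row, activation)) + b)
            for row, b in zip(weights, biases)
        ]
    return activation

# Hypothetical network: 2 inputs -> 2 hidden neurons -> 1 output.
layers = [
    ([[0.5, -0.5], [0.25, 0.75]], [0.0, 0.0]),  # hidden layer
    ([[1.0, -1.0]], [0.0]),                     # output layer
]
guess = forward([1.0, 1.0], layers)
print(guess)  # a one-element list: the network's current guess
```

Nothing is learned at this stage: the output depends only on the input and on whatever weights and biases the network currently holds.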

Step 2: Calculate the Error (Loss/Cost Function)

- the network's output is compared to the correct answer (the label, which is available in supervised learning)

- a loss function turns the difference between the network’s guess and the correct answer into a single number, called the ‘loss’ or ‘error’
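
As a rough illustration, the sketch below uses mean squared error, which is only one of several common loss functions and is an assumption here; the example predictions and targets are made up.

```python
def mse_loss(predictions, targets):
    """Mean squared error: average of the squared differences."""
    squared_errors = [(p - t) ** 2 for p, t in zip(predictions, targets)]
    return sum(squared_errors) / len(squared_errors)

# Hypothetical guesses from the network and the matching correct answers.
loss = mse_loss([0.8, 0.1], [1.0, 0.0])
print(loss)  # about 0.025; a perfect guess would give 0.0
```

The smaller this number, the closer the guess was to the correct answer.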

Step 3: Backward Pass (Back-propagation)

- the network uses this error to figure out how much each individual weight and bias contributed to the mistake

- it essentially propagates the error backward through the network, from the output layer all the way to the input layer
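
The backward pass can be sketched for a tiny chain of two sigmoid neurons using the chain rule. The network shape, the squared-error loss, and the parameter values are all assumptions made for this example, and the function names are hypothetical.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def loss_fn(params, x, target):
    """Forward pass plus squared-error loss for a 1-1-1 network."""
    w1, b1, w2, b2 = params
    h = sigmoid(w1 * x + b1)   # hidden neuron
    y = sigmoid(w2 * h + b2)   # output neuron
    return (y - target) ** 2

def backward(params, x, target):
    """Return d(loss)/d(param) for each of w1, b1, w2, b2."""
    w1, b1, w2, b2 = params
    # Forward pass, keeping the intermediate values.
    h = sigmoid(w1 * x + b1)
    y = sigmoid(w2 * h + b2)
    # Start at the output: how the loss changes with the output neuron's input.
    delta_out = 2.0 * (y - target) * y * (1.0 - y)
    grad_w2 = delta_out * h
    grad_b2 = delta_out
    # Propagate the error one layer back through w2 and the hidden sigmoid.
    delta_hidden = delta_out * w2 * h * (1.0 - h)
    grad_w1 = delta_hidden * x
    grad_b1 = delta_hidden
    return [grad_w1, grad_b1, grad_w2, grad_b2]

params = [0.5, 0.1, -0.3, 0.2]  # made-up starting values
grads = backward(params, x=1.5, target=1.0)
print(grads)
```

Each gradient says how much the loss would change if that one weight or bias were nudged slightly, which is exactly the "contribution to the mistake" described above.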

Step 4: Update Weights

- based on the contributions worked out in the backward pass, each weight and bias is adjusted slightly in the direction that reduces the error

- the goal: the next time the network sees this input, it will make a better guess
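
A minimal sketch of one update step, assuming plain gradient descent with a fixed learning rate. The single linear neuron, the learning rate of 0.05, and the training example are illustrative choices, not something the post prescribes.

```python
def loss_and_grads(params, x, target):
    """One linear neuron: guess = w * x + b, loss = (guess - target)^2."""
    w, b = params
    error = (w * x + b) - target
    return error ** 2, [2.0 * error * x, 2.0 * error]

def update(params, grads, learning_rate):
    """Move each parameter a small step against its gradient."""
    return [p - learning_rate * g for p, g in zip(params, grads)]

params = [0.0, 0.0]     # made-up starting weight and bias
x, target = 2.0, 1.0    # hypothetical training example

loss_before, grads = loss_and_grads(params, x, target)
params = update(params, grads, learning_rate=0.05)
loss_after, _ = loss_and_grads(params, x, target)

print(loss_before, loss_after)  # the loss on this input drops after the update
```

Repeating the four steps over many examples is what gradually moves the weights and biases toward values that give better guesses.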

